Sensory Substitution: How the Brain Turns Touch Into Vision

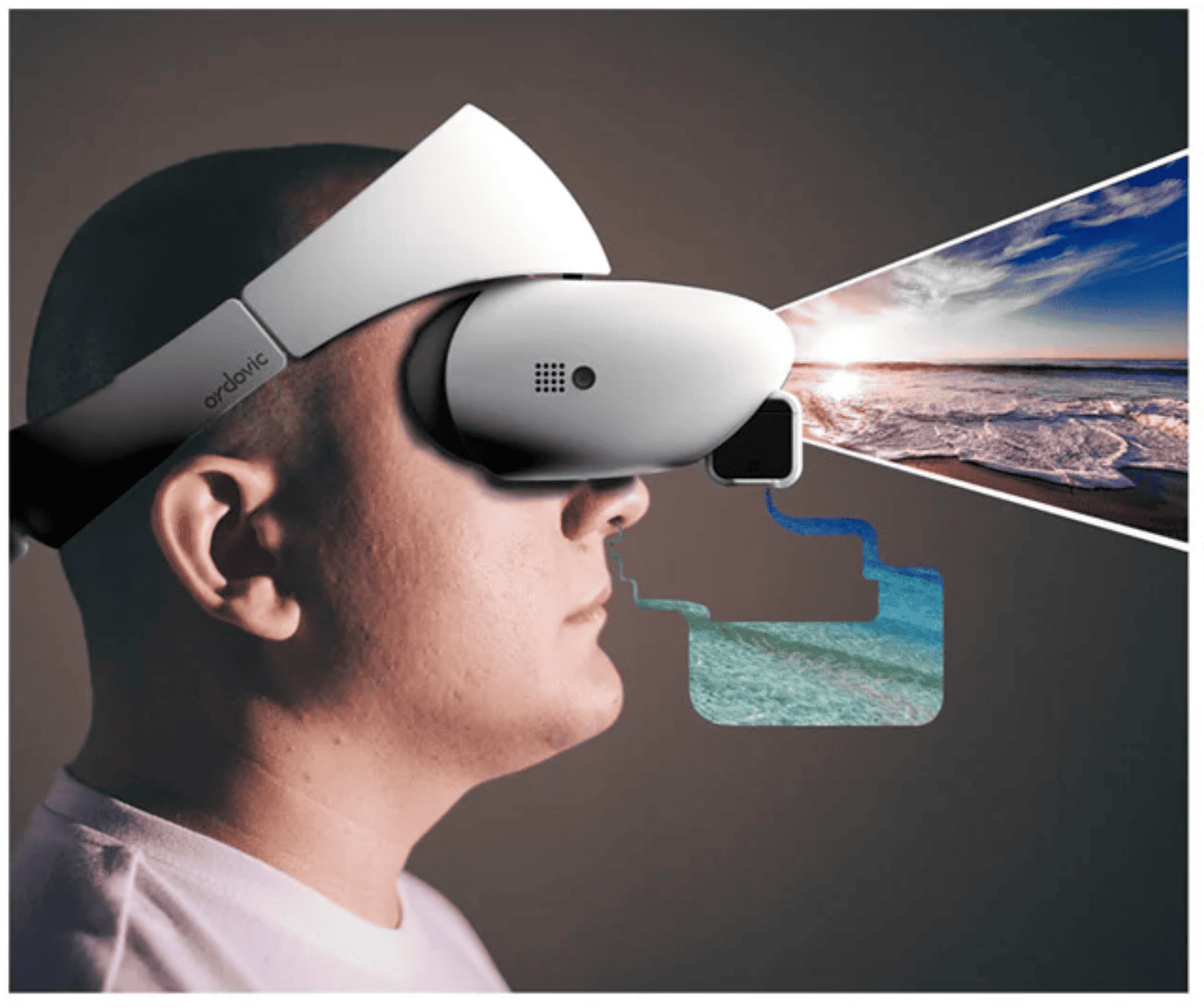

What happens when the brain receives information through the “wrong” sense? Imagine touch becoming vision, or sound becoming shape. Such a possibility is hard to picture, and the concept behind it, known as Sensory Reality Swaps, is often treated as science fiction.

To understand the logic behind this concept, we first need to recognize that the human brain is not hard-wired to a fixed sensory map.

Senses such as vision, hearing, and touch are processed in specialized brain regions, but the brain’s internal architecture remains remarkably flexible. When a sensory channel is lost or weakened (through an accident, injury, or another cause) or is artificially rerouted, neural circuits can reorganize with surprising precision, and Sensory Reality Swaps occur.

This reorganization is possible because of mechanisms such as cortical plasticity, topographic remapping, and sensory substitution. Together, they point to a remarkable future possibility in which touch becomes vision, or sound becomes shape. The rest of this article explains how Sensory Reality Swaps work.

Neuroscience Behind Sensory Reality Swaps:

The mechanism behind sensory reality swaps is defined by neural principles that describe how the brain reorganizes, reassigns, or reinterprets incoming information.

The core mechanisms are sensory substitution, cross-modal plasticity, cortical remapping, and predictive coding.

Cross-Modal Plasticity:

Cross-modal plasticity is the brain’s natural flexibility to repurpose cortex that normally serves one sense. It is possible because the cortex is organized by function, not by a fixed sensory identity: if one sensory channel weakens or is artificially rerouted, the brain reallocates the neural territory that channel leaves unused.

Some examples:

- In individuals with congenital blindness (severe vision loss or complete absence of vision present from birth), the occipital cortex responds to tactile reading (Braille) and sound patterns.

- In individuals with congenital deafness (severe hearing loss or complete absence of hearing present from birth), regions of the auditory cortex process peripheral visual motion.

These two examples suggest that the cortex can process structured input regardless of its sensory source, provided the input is delivered in an interpretable pattern.

How does this help with sensory swaps?

If touch data is encoded as spatial frequency or intensity patterns, the visual cortex can learn to interpret it as “vision-like” information.
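As a minimal sketch of what encoding touch data as intensity patterns could mean, the Python below average-pools a tiny grayscale image into a coarse grid of vibration intensities. It assumes a hypothetical tactile display with one vibrating element per grid cell; the function name `image_to_tactile_grid`, the 0-255 brightness scale, and the 0-1 intensity scale are illustrative assumptions, not the interface of any real device.

```python
# Hypothetical sketch: turn a grayscale image into vibration intensities for an
# imagined tactile display with one vibrating element per grid cell.

def image_to_tactile_grid(image, grid_rows, grid_cols):
    """Average-pool `image` (rows of 0-255 brightness values) into a
    grid_rows x grid_cols grid of intensities between 0.0 and 1.0."""
    img_rows, img_cols = len(image), len(image[0])
    if img_rows % grid_rows or img_cols % grid_cols:
        raise ValueError("image dimensions must be divisible by the grid size")
    cell_h, cell_w = img_rows // grid_rows, img_cols // grid_cols
    grid = []
    for gr in range(grid_rows):
        row = []
        for gc in range(grid_cols):
            # Sum the brightness of every pixel that falls inside this cell.
            total = sum(
                image[r][c]
                for r in range(gr * cell_h, (gr + 1) * cell_h)
                for c in range(gc * cell_w, (gc + 1) * cell_w)
            )
            mean = total / (cell_h * cell_w)
            row.append(round(mean / 255, 2))  # brightness -> vibration intensity
        grid.append(row)
    return grid


# A 4 x 4 image: bright on the left, dim on the right.
image = [
    [255, 255, 64, 64],
    [255, 255, 64, 64],
    [255, 255, 64, 64],
    [255, 255, 64, 64],
]
print(image_to_tactile_grid(image, 2, 2))  # [[1.0, 0.25], [1.0, 0.25]]
```

Because pooling preserves layout, the bright left side of the image still arrives as strong vibration on the left of the grid; that preserved spatial structure is what the cortex has to work with.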

Sensory Substitution Devices (SSDs):

Neuroscientists have already tested early versions of reality swaps:

- The vOICe: This system converts vision into sound by translating a camera’s video frames into soundscapes. Over time, users learn to “see” with their ears as the brain learns to interpret the new code. fMRI data have shown visual cortex activation during this auditory input.

- BrainPort: It is designed to provide vision through touch on the tongue, using a small electrode array that delivers patterns of electrical pulses corresponding to visual scenes.

Even though the sensory data arrive via touch, experiments have shown recruitment of visual cortical areas.

These studies indicate that the brain cares more about the structure of information than about the sensory channel that carries it.
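The first device described above turns camera frames into soundscapes. The sketch below shows one way such a mapping can work, following the scheme commonly described for The vOICe: the image is scanned column by column from left to right, higher rows sound higher in pitch, and brighter pixels sound louder. The function `image_to_soundscape`, the one-second scan, and the 500-5000 Hz range are assumptions for illustration, and the code produces a list of sound events rather than actual audio.

```python
# Hypothetical sketch of an image-to-sound mapping: scan left to right,
# height -> pitch, brightness -> loudness. Parameter values are assumptions.

def image_to_soundscape(image, scan_seconds=1.0, f_low=500.0, f_high=5000.0):
    """Return a list of (onset_seconds, frequency_hz, loudness) events,
    one for every pixel that is not completely dark."""
    n_rows, n_cols = len(image), len(image[0])
    column_duration = scan_seconds / n_cols
    events = []
    for col in range(n_cols):                 # left-to-right scan over time
        onset = col * column_duration
        for row in range(n_rows):
            brightness = image[row][col]
            if brightness == 0:
                continue                      # dark pixels stay silent
            # Top row -> f_high, bottom row -> f_low, spaced exponentially
            # so equal steps in height sound like equal musical intervals.
            height = 1 - row / (n_rows - 1) if n_rows > 1 else 1.0
            freq = f_low * (f_high / f_low) ** height
            loudness = brightness / 255       # brighter -> louder
            events.append((round(onset, 3), round(freq, 1), round(loudness, 2)))
    return events


# A line rising from bottom-left to top-right.
diagonal = [
    [0, 0, 255],
    [0, 255, 0],
    [255, 0, 0],
]
print(image_to_soundscape(diagonal))
# [(0.0, 500.0, 1.0), (0.333, 1581.1, 1.0), (0.667, 5000.0, 1.0)]
```

A line rising to the right becomes a sweep of rising pitch over the scan, which is the kind of regularity users learn to “see.”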

Predictive Coding:

Because the brain constantly builds internal models of the world, it can learn the rules of a new sensory language through predictive coding.

When exposed to a new sensory code, such as “vertical lines feel like vertical vibration,” the brain starts predicting patterns:

- The incoming data is encoded.

- The brain compares the data with its predictions and minimizes the prediction error.

- Over repeated exposure, the internal model stabilizes.

Rather than simple detection of raw stimuli, this process lets a person experience something closer to perception.
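The encode, compare, and stabilize steps can be illustrated with a toy prediction-error loop. This is a simple delta-rule learner, a much simpler relative of predictive coding and not a model of cortex: a hypothetical internal model predicts a visual feature from a tactile signal (+1 for vertical vibration, -1 for horizontal), compares the prediction with the actual feature, and adjusts itself to shrink the error. The encoding, the learning rate, and the function name `learn_new_code` are illustrative assumptions.

```python
# Hypothetical toy: a delta-rule learner that reduces prediction error.
# Tactile signal: +1.0 = vertical vibration, -1.0 = horizontal vibration.
# Target visual feature: +1.0 = vertical line, -1.0 = horizontal line.

def learn_new_code(signals, targets, learning_rate=0.5, epochs=5):
    """Learn weight w so that w * signal predicts the target.
    Returns the final weight and the total absolute error of each epoch."""
    w = 0.0                                   # internal model starts naive
    epoch_errors = []
    for _ in range(epochs):
        total_error = 0.0
        for signal, target in zip(signals, targets):
            prediction = w * signal           # what the model expects to "see"
            error = target - prediction       # prediction error
            w += learning_rate * error * signal  # update to shrink the error
            total_error += abs(error)
        epoch_errors.append(total_error)
    return w, epoch_errors


w, errors = learn_new_code([1.0, -1.0], [1.0, -1.0])
print(round(w, 3))   # 0.999
print(errors)        # [1.5, 0.375, 0.09375, 0.0234375, 0.005859375]
```

The total error falls on every pass and the weight approaches 1, the point at which the model has absorbed the rule and stops needing large corrections.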

Cortical Remapping and Hebbian Learning:

Repeatedly pairing a new stimulus with a perceptual interpretation strengthens the synaptic connections involved. This is Hebbian learning, often summarized as:

“Cells that fire together wire together.”

For instance:

If bright light corresponds to a high-frequency vibration, the neuronal assemblies representing vibration will repeatedly co-activate with the assemblies representing brightness. After repeated co-activation, the mapping becomes automatic and unconscious.
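The brightness and vibration example can be sketched with the basic Hebbian update, in which a connection weight grows by a learning rate times the product of the two assemblies’ activity. The function `hebbian_training`, the 0/1 activity values, and the cap `w_max` are assumptions added for illustration; plain Hebbian learning has no upper bound, so models usually add a cap or normalization.

```python
# Hypothetical sketch of the plain Hebbian rule: dw = eta * pre * post.

def hebbian_training(trials, eta=0.1, w_max=1.0):
    """trials: list of (vibration_activity, brightness_activity) pairs, each 0 or 1.
    Returns the connection weight after every trial, rounded for readability."""
    w = 0.0
    history = []
    for pre, post in trials:
        # Only co-activation (pre = post = 1) strengthens the link; the cap
        # w_max is an added assumption that keeps the weight bounded.
        w = min(w_max, w + eta * pre * post)
        history.append(round(w, 2))
    return history


paired = [(1, 1)] * 5              # vibration and brightness co-occur on every trial
unpaired = [(1, 0), (0, 1)] * 3    # both occur, but never together
print(hebbian_training(paired))    # [0.1, 0.2, 0.3, 0.4, 0.5]
print(hebbian_training(unpaired))  # [0.0, 0.0, 0.0, 0.0, 0.0, 0.0]
```

Only trials in which both assemblies are active strengthen the link; when vibration and brightness occur separately, the weight never moves.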

The Role of Multisensory Areas:

Several brain regions act as “cross-modal translators,” a role important for neuroprosthetic integration:

- Superior Colliculus: The SC integrates visual, auditory, and tactile information to construct a spatial map.

- Posterior Parietal Cortex: Links body-centered space with external sensory cues, which makes it important for interpreting swapped sensory data as spatial information.

- Superior Temporal Sulcus: Processes complex multisensory correlations.

Because these areas specialize in merging sensory information into a coherent percept (multisensory integration), they help make swapped signals feel natural rather than artificial.
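The superior colliculus is described above as combining visual, auditory, and tactile cues into a spatial map. One standard textbook model of how such cues can be combined is inverse-variance (maximum-likelihood) weighting, shown below as a swapped-in illustration rather than a description of how any of these regions is actually wired. The function name `combine_cues` and the example locations and uncertainties are hypothetical.

```python
# Hypothetical sketch: inverse-variance (maximum-likelihood) cue combination,
# a textbook model of multisensory integration, not a model of the SC itself.

def combine_cues(cues):
    """cues: list of (estimate, standard_deviation) pairs from independent
    Gaussian cues, e.g. an object's location in degrees.
    Returns (fused_estimate, fused_variance)."""
    weights = [1.0 / sd ** 2 for _, sd in cues]   # reliable cues weigh more
    total = sum(weights)
    fused = sum(w * est for w, (est, _) in zip(weights, cues)) / total
    return fused, 1.0 / total


# Vision says 10 degrees (sharp); hearing says 20 degrees (blurrier).
print(combine_cues([(10.0, 1.0), (20.0, 2.0)]))  # (12.0, 0.8)
```

The fused estimate lands closer to the sharper visual cue, and its variance (0.8) is lower than that of either cue alone (1.0 and 4.0); under this model, merging senses yields a more reliable estimate than any single sense.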

Neural Coding Strategies for Reality Swaps:

To turn one sense into another, the information must be encoded in a format the brain can learn:

- Spatial Coding: The location of touch patterns on the skin maps to shapes or locations in space.

- Temporal Coding: Changes in sound frequency signal changes in visual brightness.

- Intensity Coding: Vibration amplitude represents object size or distance.

The brain interprets these formats much as it interprets motion, visual contrast, and edges.
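The three coding strategies can be sketched as three small mapping functions. Every name, range, and scale here (a 4 x 4 skin array, a 200-2000 Hz tone range, a 0.5-5 m sensing range, linear mappings) is a hypothetical choice for illustration, not a specification from any real device.

```python
# Hypothetical sketches of the three coding strategies. All ranges are
# illustrative assumptions, not parameters of a real device.

def spatial_code(x, y, rows=4, cols=4):
    """Spatial coding: map a scene position (x, y in 0-1, y=0 at the top)
    to the (row, col) of the matching element on a skin-mounted array."""
    col = min(int(x * cols), cols - 1)        # clamp x = 1.0 into the last column
    row = min(int(y * rows), rows - 1)
    return row, col


def temporal_code(brightness, f_min=200.0, f_max=2000.0):
    """Temporal coding: map brightness (0-1) to a tone frequency in Hz,
    so a change in frequency signals a change in brightness."""
    return f_min + brightness * (f_max - f_min)


def intensity_code(distance_m, near=0.5, far=5.0):
    """Intensity coding: map distance in meters to vibration amplitude (0-1);
    nearer objects vibrate harder."""
    d = max(near, min(far, distance_m))       # clamp to the sensing range
    return (far - d) / (far - near)


print(spatial_code(0.9, 0.1))   # (0, 3): top-right element
print(temporal_code(0.5))       # 1100.0
print(intensity_code(2.75))     # 0.5
```

Linear mappings keep the sketch short; a practical system might prefer logarithmic scales, since perceived pitch and loudness grow roughly logarithmically with frequency and intensity.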

Long-Term Plasticity:

Through long-term plasticity, perception becomes a habit: with training, users no longer consciously analyze the substituted sensory input. Users report:

- Seeing shapes with sound.

- Feeling motion with light.

- Hearing depth from tactile pulses.

This shows that perception is not inherent to any specific sense organ; rather, it is constructed by the brain.

To Summarize:

Why Are Sensory Reality Swaps Neuroscientifically Possible?

- The cortex is modality-agnostic, meaning it can work with any type of sensory input, not just the one it usually handles.

- When one sense is lost or changed, neural plasticity allows the brain to reassign jobs among its regions.

- Through predictive coding, the brain learns new sensory languages, that is, new types of signals.

- Multisensory brain areas mix information from many senses, so they can handle swapped inputs easily.

- Through Hebbian learning, neurons that fire together form stronger connections, which makes new mappings stable.

- Real devices that let people “see” through touch or through sound have already been demonstrated.

These are the neuroscientific foundations that make science-fiction concepts like sensory swaps feel scientifically sound. In short, the brain constructs perception.

FAQs: Sensory Reality Swaps

1. What are sensory reality swaps?

Techniques that reroute information from one sense into another. For example, visual patterns can be translated into sounds or tactile stimuli so the brain learns to “see” through hearing or touch.

2. How does the brain make sense of swapped inputs?

To interpret the new signals, the brain relies on neural plasticity, its ability to reorganize. Over time, it comes to treat these signals as meaningful sensory information.

3. Does the cortex really accept any type of input?

Within limits, yes. As long as the patterns are consistent and structured, many cortical areas are flexible enough to process information regardless of its original sensory channel.

4. Who can benefit from these sensory reality swaps?

People with blindness, low vision, hearing loss, or other sensory impairments can benefit, gaining new ways to navigate, recognize objects, or perceive their surroundings.

5. What devices help perform these swaps?

Examples include tactile displays that convert visual images into touch patterns and auditory devices that convert visual scenes into soundscapes.

6. How long does it take for the brain to adapt?

It varies. Some people grasp basic patterns within days, while deeper interpretation, such as recognizing objects, may take weeks to months of consistent use.

7. Is swapping the same as restoring the lost sense?

Swapping is not the same as restoring; it does not recreate the original sensory experience. Instead, it offers an alternative pathway that provides functional information.

8. Why do these swaps work at all?

The swaps work because the brain is fundamentally predictive and pattern-driven. Through repeated exposure, it learns the new “language” and strengthens useful connections.

9. Are these technologies safe?

The devices discussed here are noninvasive and considered safe. Experimental invasive methods exist, but they are used only in controlled clinical settings.

10. Will future brain-machine interfaces make sensory swaps more natural?

Yes. Advances in neuroprosthetics, AI decoding, and direct cortical stimulation are moving toward smoother, faster, and more intuitive sensory substitution systems.
